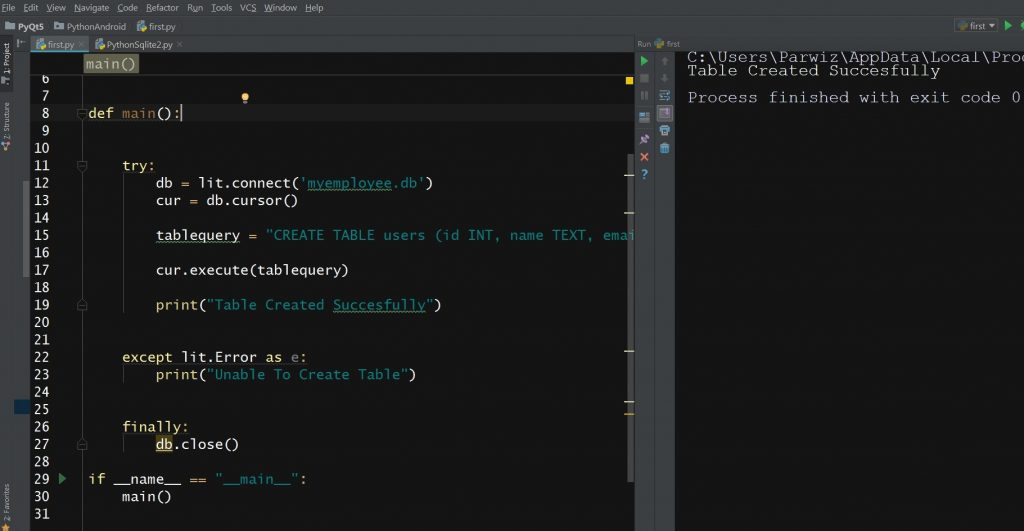

Cleaning and preparing data for DataFrame-centric projects can be one of the less enviable tasks. Optimus is an all-in-one toolset designed to load, explore, cleanse, and write data back to various data sources. Optimus can use pandas, Dask, cuDF (and Dask + cuDF), Vaex, or Spark as its underlying data engine. You can load from and save back to Arrow, Parquet, Excel, various common database sources, or flat-file formats like CSV and JSON. The data manipulation API in Optimus is like that of pandas, but it offers additional accessors that make various tasks much easier to perform. For example, you can sort a DataFrame, filter it based on column values, change data using specific criteria, or narrow down operations based on certain conditions. Optimus also includes processors designed to handle common real-world data types such as email addresses and URLs. Note that although Optimus is still under active development, its last official release was in 2020. As a result, it may be less up-to-date than other components in your stack.

The pandas library in Python is highly regarded for its robust data manipulation and analysis capabilities, equipping users with powerful tools to handle structured data. While pandas excels at managing data efficiently, there are circumstances where converting a pandas DataFrame into an SQL database becomes essential. This conversion enables deeper analysis and integration with other systems. In this article, we will explore the process of transforming a pandas DataFrame into SQL using the SQLAlchemy library in Python. SQLAlchemy offers a database-agnostic interface, allowing us to interact with various SQL databases such as SQLite, MySQL, and PostgreSQL. This versatility lets us adapt to different use cases and connect to whichever database engine we need.

In this step, we ensure that the pandas and SQLAlchemy libraries are installed in our Python environment. These libraries simplify code development by providing pre-written functions and tools. We use pip, a package manager bundled with Python, to download and install external libraries from PyPI. Installing both packages with pip makes pandas and SQLAlchemy available, so that we can import and use them in our Python programs and proceed with converting a pandas DataFrame into SQL. To get started, import the pandas and SQLAlchemy modules into your Python script or Jupyter Notebook; pandas is conventionally imported with `import pandas as pd`.

Moving forward, let's create a sample pandas DataFrame that we can convert into an SQL database. In this example, we'll work with a DataFrame containing employee information. A pandas DataFrame called df is created from a dictionary named data. The DataFrame has three columns: 'Name', 'Age', and 'Department'. The values for each column are populated from the respective lists within the dictionary. As a final step, the code prints df, producing the output shown in the image above.

To convert a DataFrame into SQL, create an SQL database engine using SQLAlchemy. This engine handles communication between Python and the database, enabling SQL query execution and other operations. Remember to specify the database type and connection URL. For simplicity, let's use an SQLite database as an example.
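The pandas-to-SQL workflow described in this article (build a DataFrame from a dictionary, create an SQLAlchemy engine for SQLite, write the table) can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the article's original snippet: the sample values, the table name `employees`, and the choice of an in-memory SQLite database are all hypothetical, and it assumes pandas and SQLAlchemy have already been installed with pip.

```python
import pandas as pd
from sqlalchemy import create_engine

# Column names follow the article; the values themselves are invented sample data.
data = {
    "Name": ["Alice", "Bob", "Carol"],
    "Age": [30, 25, 41],
    "Department": ["HR", "Engineering", "Finance"],
}
df = pd.DataFrame(data)
print(df)

# "sqlite://" with no file path asks SQLAlchemy for an in-memory SQLite database
# (an assumption for this sketch; a file-backed URL would look like "sqlite:///some_file.db").
engine = create_engine("sqlite://")

# Write the DataFrame to a table. The table name "employees" is hypothetical.
# if_exists="replace" drops any existing table of that name first;
# index=False keeps the DataFrame index out of the table.
df.to_sql("employees", con=engine, if_exists="replace", index=False)

# Read the table back through the same engine to confirm the round trip.
result = pd.read_sql_query("SELECT Name, Age, Department FROM employees", con=engine)
print(result)
```

An in-memory database disappears when the program exits, which is convenient for experimenting; for data that should persist, a file-backed SQLite URL or another database's connection URL would be passed to `create_engine` instead.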
